Custom AI development.

Pragmatic, secure, built for ROI

We provide AI software development services, securely connecting modern LLMs to legacy software, automating complex workflows, and delivering measurable business outcomes through our structured Agentic Development Lifecycle (ADLC).

AI development services structured by ROI tiers

Choosing the right AI tier depends on your current constraints, including budget, data readiness, compliance exposure, and operational complexity. We structure AI initiatives around these tiers, evaluating risk, ROI, and production feasibility.

Tier 1: AI readiness & consulting

We validate your business goals before you invest in development. Before building anything, we evaluate whether AI is economically justified for your use case.

We audit:

- Data availability and quality.

- Infrastructure and integration constraints.

- Security and compliance exposure.

- Operational workflow impact.

- Projected token consumption and cloud costs.

Tier 2: RAG systems & copilots

We securely connect AI to your business knowledge. This is where most companies begin production AI. We build retrieval-augmented generation (RAG) systems and copilots that:

- Connect to internal documents, ERP, CRM, and knowledge bases.

- Operate inside VPC-isolated infrastructure.

- Restrict responses to verified data sources.

- Provide citations and traceability.

- Enforce role-based access control at the data layer.

This tier converts static knowledge into operational intelligence without training public models.

Tier 3: Agentic workflows

This tier is for companies ready to automate complex, cross-department processes. When AI moves beyond answering questions and begins executing workflows, orchestration becomes critical. Each workflow is governed through evaluation pipelines, adversarial testing, and cost simulations before full deployment. We design multi-agent systems that:

- Retrieve data.

- Reason over business rules.

- Interact with APIs.

- Trigger downstream actions.

- Escalate to humans when confidence thresholds drop.
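
As a rough illustration of the last point, the sketch below shows one way a confidence threshold could gate execution. The `AgentResult` type, the `route` function, and the 0.8 default are hypothetical names and values chosen for the example, not a description of any specific production system.

```python
from dataclasses import dataclass


@dataclass
class AgentResult:
    action: str          # the downstream action the agent proposes
    confidence: float    # 0.0 to 1.0, from a hypothetical scoring step


def route(result: AgentResult, threshold: float = 0.8) -> str:
    """Return 'execute' when confidence meets the threshold, else 'escalate'."""
    if result.confidence >= threshold:
        return "execute"
    return "escalate"


if __name__ == "__main__":
    print(route(AgentResult("refund_order", 0.93)))  # execute
    print(route(AgentResult("refund_order", 0.41)))  # escalate
```

In practice the threshold would likely be tuned per workflow, since the cost of a wrong action differs between, say, drafting an email and issuing a refund.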

Tier 4: Custom AI model development

This tier is typically used by organizations processing large data volumes or operating in regulated environments. For these organizations, we design and deploy private model strategies. This includes:

- Fine-tuning SLMs and LLMs.

- Domain-specific adaptation.

- Private model hosting (AWS, Azure, or on-prem).

- Hybrid model routing.

- Token cost optimization.

AI that delivers business value

Contact us and get a roadmap tailored to your needs.

AI pilot & prove program

Our pilot & prove program is a structured 4-6 week engagement designed to validate technical feasibility, operational readiness, and economic viability before full deployment. Instead of experimenting in isolation, we build a secure, production-realistic AI environment using a controlled slice of your actual data and infrastructure.

What we build

Inside a VPC-isolated sandbox, we:

- Connect AI to your internal systems through secure middleware.

- Implement role-based access controls at the retrieval level.

- Configure deterministic RAG architecture for source-grounded responses.

- Establish evaluation benchmarks for accuracy and consistency.

- Simulate real user workflows.

- Model projected monthly token consumption under realistic usage.

You see how the system performs under real operating conditions.
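
To make the token consumption modeling concrete, here is a minimal sketch of the arithmetic involved. The function name, the traffic figures, and the per-1,000-token prices are placeholder assumptions for illustration only; real prices depend on the model and provider.

```python
def monthly_token_cost(requests_per_day: int,
                       input_tokens: int,
                       output_tokens: int,
                       input_price_per_1k: float,
                       output_price_per_1k: float,
                       days: int = 30) -> float:
    """Estimate monthly spend from average tokens per request."""
    # Cost of one request: input and output tokens are priced separately.
    per_request = ((input_tokens / 1000) * input_price_per_1k
                   + (output_tokens / 1000) * output_price_per_1k)
    return round(requests_per_day * days * per_request, 2)


if __name__ == "__main__":
    # Placeholder scenario: 2,000 requests a day, 1,500 input tokens and
    # 300 output tokens per request, at assumed prices of 0.003 and 0.015.
    print(monthly_token_cost(2000, 1500, 300, 0.003, 0.015))
```

A forecast like this is only as good as the usage assumptions, which is why it helps to measure tokens per request on simulated workflows rather than guess them.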

What you get

At the end of the pilot & prove phase, you receive:

- A validated technical architecture blueprint.

- Documented security and governance controls.

- Measured retrieval accuracy and response benchmarks.

- A production token consumption forecast.

- A defined rollout roadmap with cost projections.

- A clear investment model for scaling.

Your leadership team can evaluate the initiative using structured data, projected costs, and measurable outcomes.

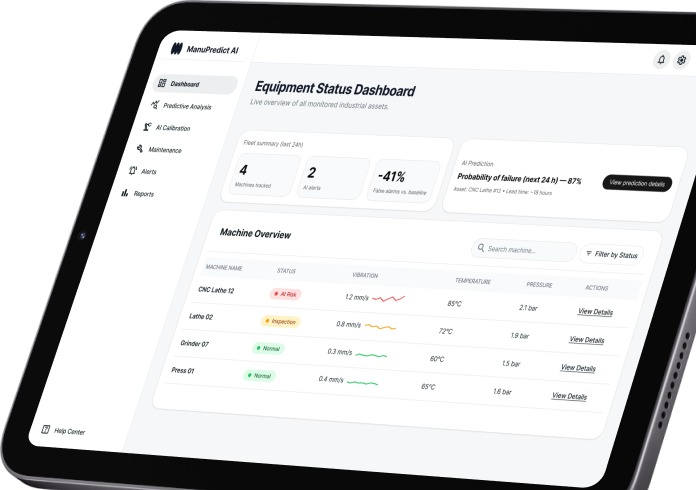

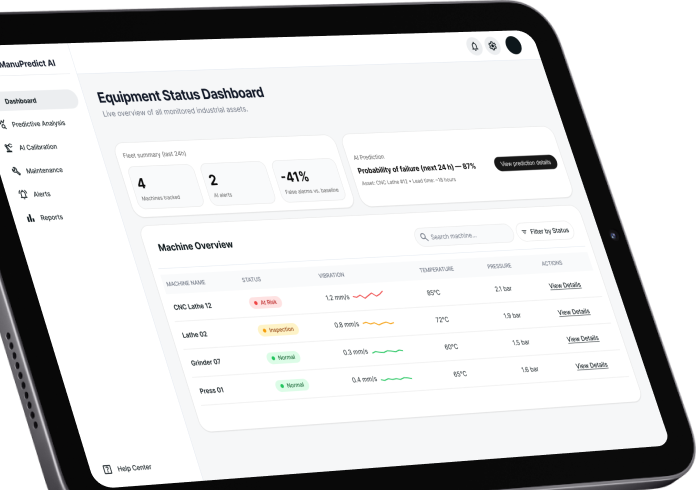

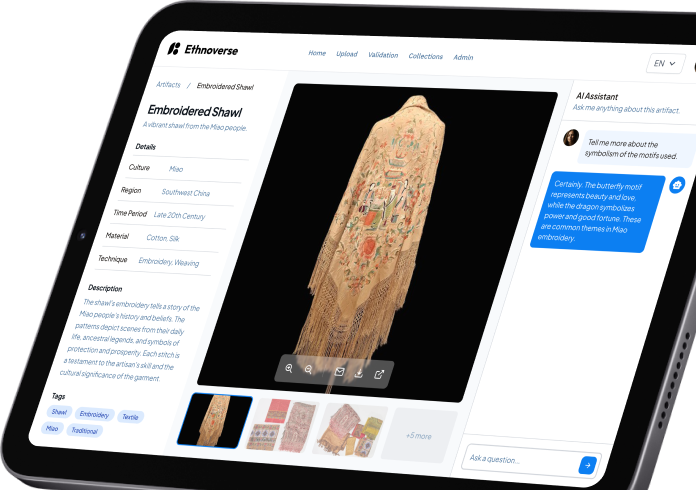

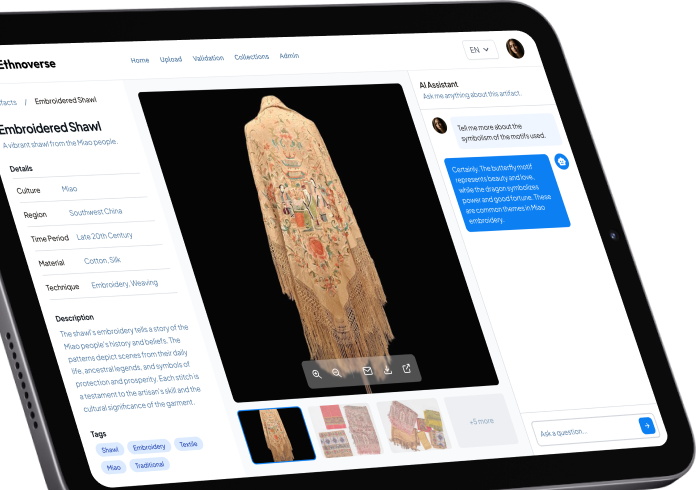

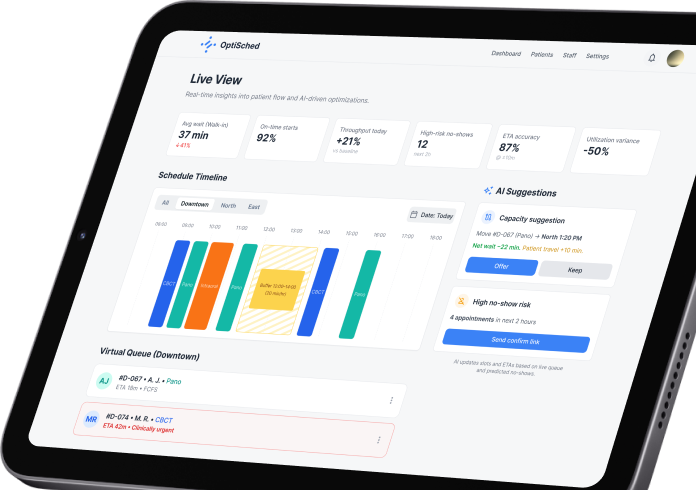

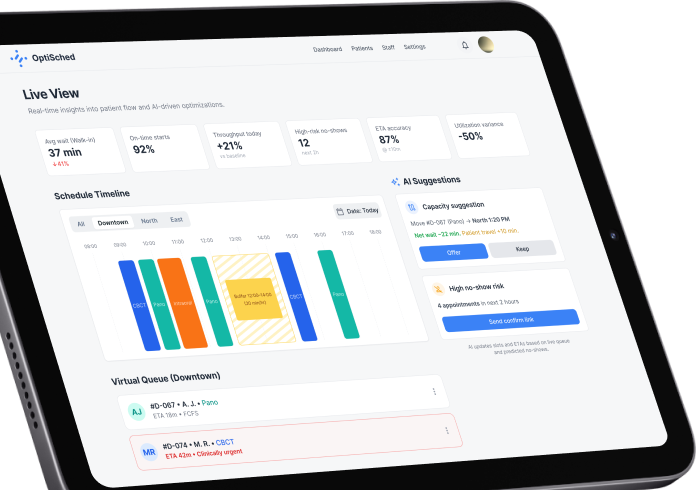

Our recent AI software

Unlock AI-powered growth

Start your journey with custom AI solutions built for business impact.

We speak both languages: legacy systems & modern AI

AI succeeds when its intelligence and your classic software infrastructure work together. We take the mature set of internal tools and software you have built up over the years and add a new, intelligent AI layer to it. We can do this because we have been developing traditional software for over 13 years, which is why we operate as a dual-engine firm.

Engineering probabilistic AI: the ADLC

Traditional software follows deterministic logic. If a condition is met, a predefined action occurs. AI systems generate responses based on probability, context, and learned patterns. This difference requires a distinct engineering discipline called the agentic development lifecycle (ADLC).

We begin by defining the operational objective before selecting models. We formalize:

- The workflow to enhance.

- The measurable business outcome.

- The decision layer to augment.

At this stage, we also map the data landscape:

- Which internal systems contain the required knowledge.

- How information flows across departments.

- Where structured and unstructured data must be connected.

AI is aligned with a clearly defined performance target from day one.

Probabilistic systems require explicit boundaries, known as guardrails. We define:

- Approved knowledge sources.

- Data access pathways.

- Permission enforcement logic.

- Conditions under which the system must decline to respond.

Guardrails are embedded directly into the architecture. The system integrates into ERP, CRM, data warehouses, and internal tools with full logging and audit visibility.
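
One simple form of a "decline to respond" condition can be sketched as follows. The source names in `APPROVED_SOURCES` and the shape of the retrieved records are assumptions made for the example.

```python
# Hypothetical allow-list of knowledge sources the assistant may cite.
APPROVED_SOURCES = {"hr_policies", "product_docs"}


def answer_or_decline(retrieved: list[dict]) -> dict:
    """Allow an answer only when at least one approved source backs it."""
    grounded = [r for r in retrieved if r["source"] in APPROVED_SOURCES]
    if not grounded:
        # Nothing approved was retrieved, so the system declines.
        return {"status": "declined",
                "reason": "no approved source supports an answer"}
    return {"status": "answer",
            "sources": sorted({r["source"] for r in grounded})}


if __name__ == "__main__":
    print(answer_or_decline([{"source": "hr_policies", "text": "..."}]))
    print(answer_or_decline([{"source": "random_forum", "text": "..."}]))
```

The check runs before any text is generated, so an unsupported question results in a refusal instead of an ungrounded answer.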

Before broader exposure, system behavior is measured. We introduce structured evaluation pipelines, including RAG evaluation frameworks, to assess:

- Faithfulness to source material.

- Retrieval precision.

- Response consistency.

- Accuracy against defined thresholds.

Performance is scored before release.

Expansion follows validated benchmarks.
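
As an example of how one of these metrics can be scored, the sketch below computes retrieval precision over the top k results and compares the average against a release threshold. The function names and the 0.7 threshold are illustrative assumptions; dedicated RAG evaluation frameworks cover faithfulness and consistency as well.

```python
def precision_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Share of the top-k retrieved documents that are actually relevant."""
    top_k = retrieved_ids[:k]
    if not top_k:
        return 0.0
    hits = sum(1 for doc_id in top_k if doc_id in relevant_ids)
    return hits / len(top_k)


def passes_release_gate(scores: list[float], threshold: float) -> bool:
    """True when the mean score over the benchmark meets the threshold."""
    return bool(scores) and sum(scores) / len(scores) >= threshold


if __name__ == "__main__":
    scores = [
        precision_at_k(["a", "b", "c", "d"], {"a", "c"}, k=4),  # 2 of 4 relevant
        precision_at_k(["x", "y"], {"x", "y"}, k=2),            # 2 of 2 relevant
    ]
    print(scores, passes_release_gate(scores, threshold=0.7))
```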

Production environments introduce scale, concurrency, and edge cases. We conduct structured red-teaming and prompt injection simulations to evaluate system resilience. We validate:

- Stability under load.

- Cost per interaction consistency.

- Cross-system impact.

- Behavioral integrity under diverse query patterns.

The system proceeds to production once reliability is demonstrated under realistic operational conditions.

Once live, structured oversight remains in place. We maintain visibility into:

- Response quality.

- Data freshness.

- Usage dynamics.

- Cost efficiency.

Adjustments are documented, controlled, and aligned with evolving business needs. The system matures deliberately, with measurable performance over time.

Security, compliance & certifications

AI systems must operate inside clearly defined technical, legal, and operational boundaries. Our engineering approach embeds these boundaries directly into the system architecture.

Infrastructure-level security

AI solutions are deployed inside your controlled cloud environment – AWS or Azure – using private networking, isolated workloads, and encrypted data flows. Access is structured through granular role-based permissions aligned with your internal policies.

Data governance by design

We define how data is ingested, processed, stored, and accessed before model integration begins. Sensitive information can be automatically redacted or masked, and access policies are enforced at both application and retrieval levels.

Compliance alignment

Our delivery processes align with ISO 27001 standards and support regulatory frameworks such as GDPR and the EU AI Act where applicable. Documentation, audit trails, and model lifecycle transparency are incorporated into the development process.

Operational oversight

Monitoring, logging, and performance tracking are integrated from day one. This ensures traceability of model outputs, system behavior, and infrastructure usage across environments.

Custom AI, real results

Book a meeting and see what’s possible with our team.

Enhance your business with industry-focused AI software

With a proven track record of artificial intelligence development services in 20+ industries, we deliver top-tier industry-focused custom AI software for new and established businesses. We can offer our expertise in big data analysis, AI development, and machine learning, and we have already brought business value to more than 350 companies across the globe.

Banking and finance

- trading solutions;

- advisory services;

- customer service automation;

- personalization solutions;

- pattern recognition and fraud prevention.

Sales & marketing

- predictive and prescriptive analytics;

- content curation solutions;

- AI and machine learning-enabled attribution;

- cross-channel personalized interactions;

- personalized messaging at scale.

Healthcare

- nursing assistant development;

- AI-assisted consultation, diagnosis & treatment;

- management of medical records and other data;

- health monitoring;

- healthcare system analysis.

Education

- differentiated and individualized learning;

- AI tutoring;

- smart content;

- global learning;

- automation of admin tasks.

Logistics & transportation

- AI-driven route optimization;

- predictive fleet maintenance;

- intelligent demand forecasting;

- real-time tracking and visibility;

- automated logistics management.

Retail

- personalized product recommendations;

- inventory forecasting and management;

- dynamic pricing optimization;

- customer behavior analytics;

- AI-powered virtual assistants and chatbots.

Manufacturing

- predictive maintenance and asset optimization;

- AI-powered quality inspection and defect detection;

- robotic process automation (RPA);

- supply-chain forecasting and planning;

- automated quality assurance.

Ecommerce

- AI-assisted search;

- speech recognition services;

- relevant offers for buyers;

- virtual agents and intelligent automation tools;

- personalized shopping experience.

Why SumatoSoft: our pragmatic guarantees

We approach every engagement with engineering discipline and commercial responsibility. We do not treat AI as a universal fix. We evaluate your architecture, data maturity, risk exposure, and cost structure before recommending where intelligence belongs and where it does not.

We will not bolt an LLM directly to your core database.

We design secure middleware and API abstraction layers that protect your legacy systems from instability, latency, and injection risks.

We will not use your proprietary data to train public models.

Your data stays inside your controlled infrastructure. We deploy AI within secure VPC environments with strict access controls and auditability.

We will not push AI where it does not create business value.

If a deterministic SDLC solution delivers the required result faster and at lower cost, we recommend it. We are dual-engine engineers. We build intelligence that generates ROI and structured software that ensures stability.

Turn data into action

Schedule a call and discover how AI can work for you.

Part of the AI tech stack we work with

Here are just a few of the tools we use for AI software development. The final choice depends on your specific business goals.

Your data never trains public models

Enterprise AI requires architectural clarity. Your proprietary information remains fully under your control at every stage of development and deployment.

VPC-isolated deployment architecture

We design AI systems inside your private cloud environment – AWS or Azure – using VPC isolation, private subnets, security groups, and IAM policies. Models operate within your security perimeter and connect to internal systems through controlled middleware instead of direct database exposure.

Vector-level role-based access control (RBAC)

Access is governed at the retrieval layer. We implement vector-level RBAC and attribute-based access control (ABAC) so users can query and retrieve only the data they are authorized to access. Permissions are enforced before retrieval and generation occur.
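
The idea of enforcing permissions before retrieval can be sketched with an in-memory stand-in for a vector store. The `Chunk` type, the role names, and the precomputed `score` field are assumptions for the example; a real deployment would typically apply the same filter as a metadata condition inside the vector database query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_roles: frozenset  # roles permitted to see this chunk
    score: float              # stand-in for a vector similarity score


def retrieve(chunks: list[Chunk], user_roles: set[str], top_k: int = 3) -> list[Chunk]:
    """Filter by role first, then rank, so hidden chunks never reach the model."""
    visible = [c for c in chunks if c.allowed_roles & user_roles]
    return sorted(visible, key=lambda c: c.score, reverse=True)[:top_k]


if __name__ == "__main__":
    index = [
        Chunk("Salary bands 2024", frozenset({"hr"}), 0.95),
        Chunk("Vacation policy", frozenset({"hr", "employee"}), 0.80),
        Chunk("Office map", frozenset({"employee"}), 0.40),
    ]
    for chunk in retrieve(index, {"employee"}):
        print(chunk.text)
```

Filtering before ranking matters here: the highest-scoring chunk is excluded for a user without the `hr` role, so it can never appear in the generated answer.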

Automated PII redaction pipeline

Sensitive data is processed through automated PII detection and redaction pipelines before indexing or model interaction. We apply entity recognition, masking, and tokenization techniques to ensure protected data remains isolated from unintended system layers.
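
A heavily simplified version of the masking step might look like the sketch below. It uses regular expressions for emails and phone-like numbers as a stand-in for the entity recognition mentioned above; the patterns and placeholder tokens are assumptions for the example and would miss many real-world formats.

```python
import re

# Simplified patterns for illustration; production pipelines usually
# combine rules like these with entity recognition models.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace emails and phone-like numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


if __name__ == "__main__":
    print(redact("Contact jane.doe@example.com or +1 (555) 010-9999."))
```

Running redaction before indexing means the original values never enter the vector store, which keeps them out of both retrieval results and prompts.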

Private model invocation and secure API mediation

Foundational models are accessed via secure API gateways or deployed in private endpoints. All model calls are logged, rate-limited, and governed through middleware orchestration within your infrastructure.

Audit logging and access traceability

Every interaction – retrieval, generation, and system call – is logged and traceable. Structured audit trails provide operational transparency and support internal compliance requirements.
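
As a minimal sketch of what a structured audit entry could contain, the example below serializes each event to JSON. The field names and the `audit_record` helper are hypothetical; the three event types mirror the interactions listed above.

```python
import json
from datetime import datetime, timezone

EVENT_TYPES = {"retrieval", "generation", "system_call"}


def audit_record(user_id: str, event_type: str, detail: dict) -> str:
    """Build one JSON audit line for a retrieval, generation, or system call."""
    if event_type not in EVENT_TYPES:
        raise ValueError(f"unknown event type: {event_type}")
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "event_type": event_type,
        "detail": detail,
    }, sort_keys=True)


if __name__ == "__main__":
    print(audit_record("u-42", "retrieval", {"doc_ids": ["policy-7"]}))
```

Emitting one self-describing JSON line per event keeps the trail easy to ship to whatever log store the organization already uses.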

- Your data remains inside your infrastructure.

- Your permissions define access.

- Your governance defines control.

Frequently asked questions

How much does enterprise AI development cost?

Investment depends on scope, data complexity, integration depth, and infrastructure requirements. Structured pilots typically define clear cost boundaries early, including projected cloud and token usage. Full production systems are scoped after architectural validation to ensure financial predictability.

How long does it take to move from idea to production?

A focused pilot can be delivered within weeks. Production-grade systems follow after validation, integration planning, and performance evaluation. Timelines scale with system complexity and number of integrations.

Will our proprietary data be used to train public AI models?

No. Enterprise deployments operate within private infrastructure. Your data remains isolated and is never used to train external foundational models.

Can AI integrate with our legacy systems?

Yes. Integration is engineered through secure APIs, middleware, and structured data pipelines. AI components are embedded into your existing workflows without disrupting core systems.

How do you ensure model quality and reliability?

Every AI system is evaluated against defined performance metrics before release. Continuous monitoring, structured testing, and controlled iteration maintain output quality over time.

Let’s start

If you have any questions, email us at info@sumatosoft.com.